Another eventful week in AI has passed. On one side, we see relentless innovation in frontier models, chips, kernels, architecture, training, fine-tuning, inference optimizations, agentic workflows, automation, verifiability, and context engineering. On the other side, we see thought leaders questioning whether LLM scaling is approaching a dead end on the path to AGI.

And in the middle of this, three big questions are dominating social media:

1. What happens to AI app start-ups as LLMs keep absorbing more capabilities?

With OpenAI, Anthropic, Google, and others releasing Security Agents, Enterprise Agents, Agentic SDKs, and end-to-end orchestration platforms, founders worry: Is there space left for an AI app company?

Yes, if you build something the foundation model cannot provide on its own. The winning products will be those that capture:

- customer-specific workflows

- domain knowledge

- proprietary datasets

- context engineering

- human-in-the-loop feedback

- integrations and inferences unique to the domain

This is precisely what happened in the cloud-native era. AWS, GCP, Azure, and other public cloud providers didn’t kill innovation; they powered it.

2. Should developers rely on prompts to generate code?

As a starting point, absolutely yes. Use prompts to bootstrap a stack you already know, then iterate and refine it manually. But don’t stop at vibe coding. Move to:

- your own agent workflows

- .md-driven planning

- modular development

- repeatable build steps

Agents will increasingly become part of the development loop, not the entire loop.
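
To make ".md-driven planning" a little more concrete, here is a minimal sketch, assuming the plan lives in a Markdown file as a task list (`- [ ]` / `- [x]`). The names `Step`, `parse_plan`, `next_step`, and the sample `PLAN` text are hypothetical and only for illustration; the idea is that a human or an agent picks up the next unchecked step, so the plan stays the source of truth for the loop.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    text: str
    done: bool


# Matches Markdown task-list items such as "- [ ] add tests" or "* [x] scaffold".
_TASK = re.compile(r"^\s*[-*]\s+\[( |x|X)\]\s+(.*\S)\s*$")


def parse_plan(markdown: str) -> List[Step]:
    """Extract task-list items from a Markdown plan, in document order."""
    steps = []
    for line in markdown.splitlines():
        match = _TASK.match(line)
        if match:
            steps.append(Step(text=match.group(2), done=match.group(1).lower() == "x"))
    return steps


def next_step(steps: List[Step]) -> Optional[Step]:
    """Return the first unchecked step, or None when the plan is complete."""
    for step in steps:
        if not step.done:
            return step
    return None


# Hypothetical plan file contents; in practice this would be read from disk.
PLAN = """\
# Plan
- [x] Scaffold the API with a prompt
- [ ] Review and refactor the generated code by hand
- [ ] Add tests and a repeatable build script
"""

if __name__ == "__main__":
    todo = next_step(parse_plan(PLAN))
    print(todo.text if todo else "Plan complete")
```

Keeping the plan in a plain file means it can be reviewed and versioned alongside the code, whichever agent tooling ends up consuming it.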

3. Will no-code super apps replace domain-specific AI apps?

Large language models are being positioned for real-life applications and core lifestyle and productivity services (Alibaba unveiled the Qwen App on Nov 17th, 2025). Platforms like X’s upcoming no-code deployment flow show where things are heading.

I believe niche apps, device-native experiences, hybrid cloud-model apps, and vertical-first products will continue to thrive. There is still tremendous value to build in the AI app, infrastructure, and contextual intelligence layers.

So what should builders do now?

Start building; the field is far from saturated. Focus on:

- identifying a real business problem

- using prompts + agents to accelerate early development

- building your own project space with agent rules

- capturing context that only you can bring

- fine-tuning on your own datasets

- creating knowledge graphs

- designing better inference methods

- building unique integration touchpoints

- adding domain intelligence beyond the base model

The application layer (its data and behavior) will always matter. There may not be room for millions of apps, as there was in the early iOS/Android era, but there will be room for high-value, deeply contextual applications.

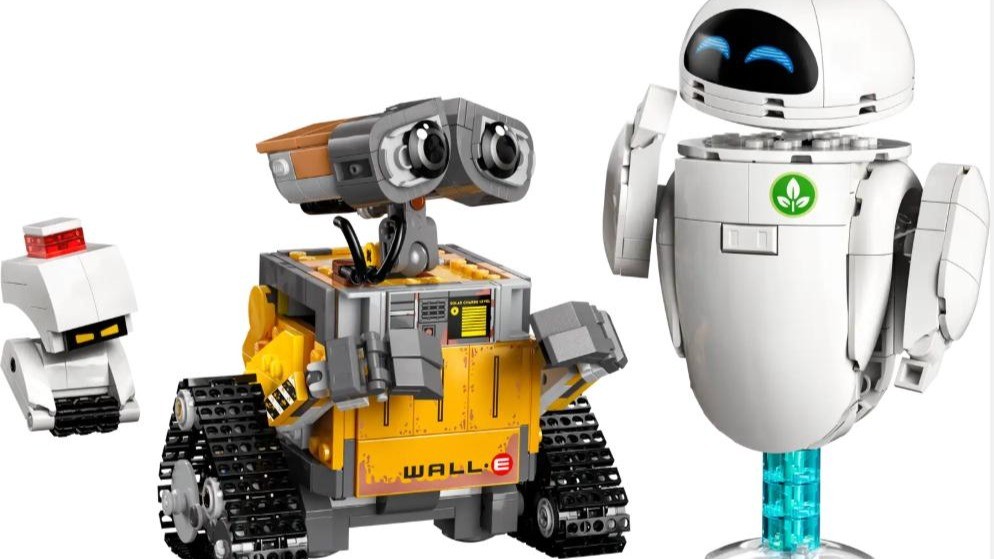

I’m reminded of the WALL-E quote: “I don’t want to survive. I want to live.”

In the same spirit, I don’t want to be a spectator in the AI revolution. I want to be a builder, contributor, and active participant.