Over the years, enterprise architecture has undergone a remarkable transformation — from tightly coupled monolithic systems to service-oriented, microservice, event-driven, and serverless architectures.

We are entering a new phase, one where AI systems, including large language models (LLMs), retrieval-augmented generation (RAG), and autonomous agents, are reshaping how enterprises build, reason about, and scale systems.

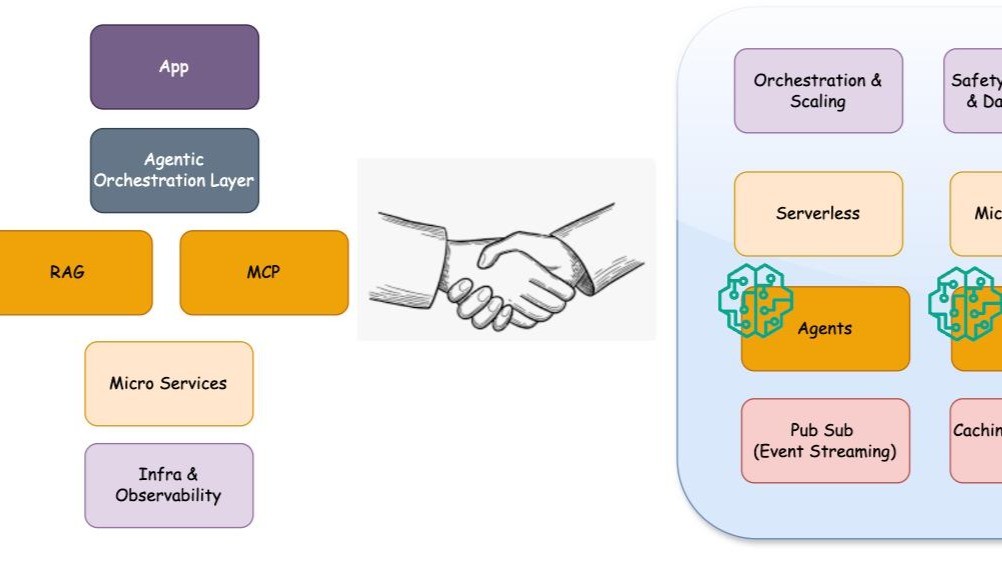

A modern enterprise must now balance deterministic microservices with adaptive AI components. A well-architected environment integrates the right mix of microservices, serverless workflows, event-driven logic, and, where appropriate, even monolithic services that continue to serve specialised purposes efficiently.

The New AI Forces Transforming Enterprise Architecture

Recent advances are pushing the boundaries of traditional systems:

- LLMs and Agents – act as reasoning layers capable of understanding, planning, and decision-making.

- RAG (Retrieval-Augmented Generation) – grounds AI responses in domain-specific, up-to-date data.

- AI Workflows (LangChain/LangGraph, LlamaIndex, DSPy, CrewAI, Semantic Kernel) – support modular orchestration and context-driven reasoning.

- Agent Protocols (A2A, A2P) – support interaction between agents and subsystems such as subscription and payment models.

- MCP (Model Context Protocol) – enables dynamic discovery and secure integration with tools, APIs, and knowledge sources.

- Fine-Tuning Frameworks (Unsloth, Mistral AI) – allow domain-specific training, including reinforcement-learning-based tuning.

The convergence of these elements demands that existing architectures be re-evaluated and extended to integrate cognitive components based on business maturity and readiness.

1) The Foundation: Microservice Fabric in Enterprise Systems

At the core of a scalable enterprise lies a strong microservice fabric built on cloud-native principles:

- Orchestration: Kubernetes, Istio, Terraform, Jenkins pipelines.

- Integration: API-centric connectivity with Subscription, Billing, OSS/BSS, and Partner systems.

- Boundaries: Clear domain demarcation and redundancy flows.

- Security: Deterministic workflows and strict access controls.

- Persistence & Caching: Milvus, Redis, MongoDB, RDS.

- Observability: Prometheus, Grafana, Elastic Stack.

Such a fabric is scalable, maintainable, and language-agnostic, and it can support multiple business domains.

2) Extending the Architecture: Standalone AI and Hosted AI Services

Adding AI components to a microservice fabric can follow two patterns — Standalone AI Applications or Hosted AI Services.

These run as dedicated services or serverless functions that:

- Fetch and clean data from enterprise systems.

- Feed data into LLMs for fine-tuning or inference.

- Generate, evaluate, and persist results.
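The three steps above can be sketched as a small pipeline. This is a minimal illustration, not a reference implementation: `first_sentence` is a hypothetical stand-in for a real LLM call, `length_ratio` is a toy metric, and the in-memory `store` dictionary stands in for whatever persistence layer the enterprise already uses.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass
class Result:
    record_id: str
    output: str
    score: float


def clean(record: dict) -> dict:
    """Collapse whitespace in every field and drop fields that end up empty."""
    cleaned = {key: " ".join(str(value).split()) for key, value in record.items()}
    return {key: value for key, value in cleaned.items() if value}


def run_pipeline(
    records: Iterable[dict],
    model: Callable[[str], str],
    evaluate: Callable[[str, str], float],
    store: Dict[str, Result],
) -> List[Result]:
    """Clean each record, run the model, score the output, and persist it."""
    results = []
    for raw in records:
        record = clean(raw)
        if "id" not in record or "text" not in record:
            continue  # skip records that are unusable after cleaning
        output = model(record["text"])
        result = Result(record["id"], output, evaluate(record["text"], output))
        store[result.record_id] = result  # persistence stand-in
        results.append(result)
    return results


def first_sentence(text: str) -> str:
    """Hypothetical stand-in for an LLM call: returns the first sentence."""
    return text.split(".")[0] + "."


def length_ratio(source: str, output: str) -> float:
    """Toy metric: output length relative to the source length."""
    return len(output) / len(source) if source else 0.0


if __name__ == "__main__":
    store: Dict[str, Result] = {}
    records = [{"id": "a1", "text": "  Hello   world. More text here. ", "note": ""}]
    for result in run_pipeline(records, first_sentence, length_ratio, store):
        print(result)
```

Passing the model and the metric in as plain callables keeps the pipeline itself deterministic and easy to test, while the AI component behind `model` can be swapped between a self-hosted and a managed option.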

Deployment Options

- Self-hosting open models (Llama, Mistral, DeepSeek, Qwen, GPT-OSS).

- Managed AI suites (AWS Bedrock, Google Gemini Enterprise, Azure OpenAI).

Operational Considerations

- Define evaluation metrics and benchmarks (Eval & MLOps integration).

- Use hybrid mechanisms for agent interaction (local + remote models).

- Outsource dataset preparation or weight tuning when needed.
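To make the first two considerations concrete, here is a minimal sketch of a hybrid router with an exact-match benchmark. Everything in it is an assumption for illustration: `local_model` and `remote_model` are hypothetical stand-ins for real model clients, the length-based routing rule is only one possible policy, and exact match is the simplest of many evaluation metrics.

```python
from typing import Callable, List, Tuple

Model = Callable[[str], str]


def make_hybrid(local: Model, remote: Model, max_local_chars: int = 200) -> Model:
    """Route short prompts to the local model; use the remote model for
    long prompts or when the local model fails."""

    def route(prompt: str) -> str:
        if len(prompt) <= max_local_chars:
            try:
                return local(prompt)
            except RuntimeError:
                pass  # local model unavailable: fall back to remote
        return remote(prompt)

    return route


def accuracy(model: Model, benchmark: List[Tuple[str, str]]) -> float:
    """Exact-match accuracy over (prompt, expected answer) pairs."""
    if not benchmark:
        return 0.0
    hits = sum(1 for prompt, expected in benchmark if model(prompt).strip() == expected)
    return hits / len(benchmark)


# Hypothetical stand-ins for real model clients.
def local_model(prompt: str) -> str:
    if "capital" in prompt:
        raise RuntimeError("local model cannot answer this")
    return "4" if prompt == "2+2?" else "unknown"


def remote_model(prompt: str) -> str:
    return "Paris" if "capital of France" in prompt else "unknown"


if __name__ == "__main__":
    hybrid = make_hybrid(local_model, remote_model)
    benchmark = [("2+2?", "4"), ("What is the capital of France?", "Paris")]
    print(f"accuracy: {accuracy(hybrid, benchmark):.2f}")
```

Running the same benchmark against the local model, the remote model, and the hybrid router gives a simple baseline for deciding which traffic can stay local.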

Media & Entertainment Use Cases

- Personalized recommendations

- Lexical and semantic search

- Metadata enrichment and auto-publishing

- Video moderation and content tagging

At this stage, enterprises effectively operate a cognitive microservice mesh, composed of:

- Semantic Services: Embedding, retrieval, and grounding

- LLM Reasoning Nodes: Generation, summarization, planning

- Workflow Orchestrators: Kubernetes jobs, Terraform pipelines

- Observability Layers: Prometheus, Grafana, Elastic

- Persistence & Caching: Milvus, Redis, MongoDB, RDS

This architecture bridges deterministic microservices with adaptive reasoning services — enabling systems that can understand, decide, and act dynamically.

3) Enterprise Architecture with Agents + RAG

RAG (Retrieval-Augmented Generation) is designed to dynamically augment an LLM’s context at inference time — typically with unstructured or semi-structured knowledge.

- It retrieves relevant documents, facts, or embeddings from a vector store (like Pinecone, FAISS, Milvus, or Elastic Vector Search).

- These retrieved chunks are injected into the model’s prompt context.

- The model uses this retrieved evidence to answer queries accurately, with up-to-date context.
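The retrieve-then-inject flow described above can be sketched without any external service. This is a toy example under stated assumptions: the bag-of-words `embed` function stands in for a real embedding model, the in-memory list stands in for a vector store, and the prompt template in `build_prompt` is purely illustrative.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy embedding: a bag of lowercase word counts (not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: List[str]) -> str:
    """Inject the retrieved chunks into the prompt sent to the model."""
    context = "\n".join(f"- {chunk}" for chunk in chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    docs = [
        "Refunds are processed within five business days.",
        "The premium plan includes offline downloads.",
        "Support is available by chat and email.",
    ]
    question = "How long do refunds take?"
    print(build_prompt(question, retrieve(question, docs, k=1)))
```

In a production setup the `embed` and `retrieve` functions would be replaced by calls to an embedding model and a vector store, while the prompt assembly step stays essentially the same.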

A hybrid approach is recommended: domain-specific adapters stay within the individual microservices, while the core RAG elements are maintained in a separate, shared service.
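One way to picture this split is the sketch below. All names here (`DomainAdapter`, `BillingAdapter`, `CoreRagService`) are hypothetical, and the substring search is only a placeholder for real vector retrieval. The point is the boundary: the adapter knows the domain's data shape, and the core service knows nothing about billing.

```python
from typing import Dict, List, Protocol


class DomainAdapter(Protocol):
    """Contract each microservice implements to expose its data to RAG."""

    domain: str

    def to_chunks(self) -> List[str]:
        ...


class BillingAdapter:
    """Lives inside the (hypothetical) billing microservice."""

    domain = "billing"

    def __init__(self, invoices: List[dict]) -> None:
        self._invoices = invoices

    def to_chunks(self) -> List[str]:
        # Turn domain records into plain-text chunks the core service can index.
        return [
            f"Invoice {inv['id']} for {inv['customer']}: {inv['status']}"
            for inv in self._invoices
        ]


class CoreRagService:
    """Shared service that owns indexing and retrieval for all domains."""

    def __init__(self) -> None:
        self._index: Dict[str, List[str]] = {}

    def ingest(self, adapter: DomainAdapter) -> int:
        chunks = adapter.to_chunks()
        self._index.setdefault(adapter.domain, []).extend(chunks)
        return len(chunks)

    def search(self, domain: str, term: str) -> List[str]:
        # Placeholder for vector search: case-insensitive substring match.
        return [c for c in self._index.get(domain, []) if term.lower() in c.lower()]


if __name__ == "__main__":
    rag = CoreRagService()
    rag.ingest(BillingAdapter([
        {"id": "INV-1", "customer": "Acme", "status": "paid"},
        {"id": "INV-2", "customer": "Globex", "status": "overdue"},
    ]))
    print(rag.search("billing", "overdue"))
```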

4) Enterprise Architecture with AI Workflows

AI workflow tools and frameworks provide runtime flexibility and semantic adaptability:

- Selecting a service or API at runtime.

- Experimenting with different LLMs and strategies at runtime.

- Managing memory and context.

- Running evaluation and feedback loops.
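The capabilities listed above can be illustrated in plain Python. The sketch below deliberately does not use LangChain, LlamaIndex, or any other framework API; the `Workflow` class and the `terse` and `contextual` strategies are hypothetical names that only show the shape of runtime strategy selection, shared memory, and a feedback loop.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Memory = List[Tuple[str, str]]  # (user input, output) pairs
Strategy = Callable[[str, Memory], str]


class Workflow:
    def __init__(self) -> None:
        self.strategies: Dict[str, Strategy] = {}
        self.memory: Memory = []
        self.scores: Dict[str, List[float]] = {}

    def register(self, name: str, strategy: Strategy) -> None:
        """Add a strategy (for example, a different LLM or prompt style)."""
        self.strategies[name] = strategy
        self.scores[name] = []

    def run(self, name: str, user_input: str) -> str:
        """Run the strategy chosen at call time and remember the exchange."""
        output = self.strategies[name](user_input, self.memory)
        self.memory.append((user_input, output))
        return output

    def record_feedback(self, name: str, score: float) -> None:
        self.scores[name].append(score)

    def best_strategy(self) -> str:
        """Return the strategy with the highest mean feedback score."""
        rated = {name: mean(s) for name, s in self.scores.items() if s}
        if not rated:
            raise ValueError("no feedback recorded yet")
        return max(rated, key=rated.get)


# Hypothetical strategies standing in for different models or prompts.
def terse(user_input: str, memory: Memory) -> str:
    return user_input.upper()


def contextual(user_input: str, memory: Memory) -> str:
    return f"[turn {len(memory) + 1}] {user_input}"


if __name__ == "__main__":
    wf = Workflow()
    wf.register("terse", terse)
    wf.register("contextual", contextual)
    print(wf.run("terse", "hello"))
    print(wf.run("contextual", "hello again"))
    wf.record_feedback("terse", 0.4)
    wf.record_feedback("contextual", 0.9)
    print(wf.best_strategy())
```

Because strategies are registered and selected by name, a new model or prompt style can be tried without touching the code that calls `run`.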

In a mature enterprise system, these frameworks enable dynamic, human-like orchestration without redeploying backend logic. They are particularly useful for:

- Experimentation and rapid prototyping.

- Evaluation, testing, and fine-tuning.

- Workflows that evolve at runtime.

- Cross-model orchestration.

5) Enterprise Architecture with MCP

As the system grows, is deployed in new regions, or needs to support new services, third-party modules and data sources, and vendor LLMs dynamically, MCP plays a critical role in bridging the deterministic enterprise world and the open, dynamic AI ecosystem.

Real-world use cases include:

- Deciding which AI service, partner, or SaaS-based tool to use.

- In a multi-tenant platform, selecting the tools specific to each tenant.

- Cross-model reasoning.

- Most importantly, aligning security and governance with the enterprise design.
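The tenant-specific tool selection and governance points can be sketched as a small registry. This is not the MCP SDK or its wire format; it is a plain-Python illustration of the list-then-call pattern with an access check, and `Tool`, `ToolRegistry`, and the sample tools are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    handler: Callable[[dict], str]
    tenants: FrozenSet[str] = frozenset()  # empty set means "all tenants"


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    @staticmethod
    def _allowed(tool: Tool, tenant: str) -> bool:
        return not tool.tenants or tenant in tool.tenants

    def list_tools(self, tenant: str) -> List[str]:
        """Discovery: only the tools this tenant may see."""
        return sorted(t.name for t in self._tools.values() if self._allowed(t, tenant))

    def call(self, tenant: str, name: str, args: dict) -> str:
        """Invocation with a governance check before the handler runs."""
        tool = self._tools.get(name)
        if tool is None or not self._allowed(tool, tenant):
            raise PermissionError(f"tool {name!r} is not available to {tenant!r}")
        return tool.handler(args)


if __name__ == "__main__":
    registry = ToolRegistry()
    registry.register(Tool("search", "Catalog search", lambda a: f"results for {a['q']}"))
    registry.register(
        Tool("billing_export", "Export invoices", lambda a: "exported",
             tenants=frozenset({"tenant-a"}))
    )
    print(registry.list_tools("tenant-a"))
    print(registry.list_tools("tenant-b"))
    print(registry.call("tenant-b", "search", {"q": "drama"}))
```

Keeping the access check inside `call`, rather than trusting the agent to respect the listing, means a tool hidden from a tenant cannot be invoked even if its name is guessed.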

Final Thoughts

The Path Forward: From Automation to Intelligence

The evolution from monolithic to agentic architectures reflects a larger shift — from systems that execute instructions to systems that reason, adapt, and collaborate.

Modern enterprise architecture will increasingly blend:

- Deterministic logic (microservices) for reliability

- Orchestration (K8s, Terraform) for resilience

- Cognitive reasoning (LLMs and agents) for adaptability

- Dynamic retrieval (RAG) for context awareness

- Prototyping and chaining (AI workflows) for faster go-to-market

- Secure dynamic integration (MCP) for a dynamic agent ecosystem

The future enterprise is not just automated — it’s intelligent, context-aware, and self-optimising.

As enterprises embrace AI, the goal is not to replace existing microservices but to extend them with reasoning and contextual intelligence. The most successful architectures will be those that merge the discipline of software engineering with AI Systems.

Note: I will write a follow-up article covering feedback on this one and the impact of recently announced off-the-shelf enterprise AI systems such as Google Gemini, AWS Quick Suite, and others.

#EnterpriseAI #AgenticAI #LLM #RAG #Agenticworkflows #AgenticProtocols #Agents #AI #ML #OpenAI #Gemini #Claude #Google #GPT